Built for Control

Four new capabilities that put you in charge of how Cyréna thinks, what she knows, and when she acts.

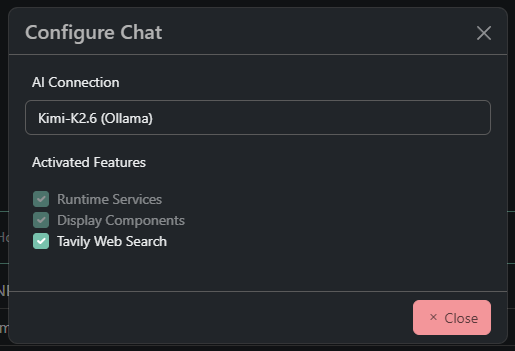

Feature Activation

New: The model only knows what it needs to know.

Feature Activation lets you enable or disable tools and capabilities per chat. When a feature is off, it is not just hidden from view: for the model, it does not exist at all. No confusion, no accidental tool use, no noise. Cyréna stays focused on exactly what your current task requires.

- Enable or disable tools per chat

- Disabled features are invisible to the model

- No accidental tool calls or confusion

- Surgical precision for every task
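One way to picture "invisible to the model" is a filter applied before each request is built. The sketch below is illustrative only: `Tool`, `Chat`, `visible_tools`, and the tool names are hypothetical and are not Cyréna's actual API.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Tool:
    name: str
    description: str


@dataclass
class Chat:
    # Names of the tools the user has switched on for this chat (hypothetical model).
    enabled: set[str] = field(default_factory=set)


def visible_tools(chat: Chat, registry: list[Tool]) -> list[Tool]:
    """Return only the tools enabled for this chat.

    Disabled tools are dropped before the request is built, so the
    model never receives their names or descriptions.
    """
    return [tool for tool in registry if tool.name in chat.enabled]


# Illustrative registry; the tool names are made up for this example.
registry = [
    Tool("web_search", "Search the web"),
    Tool("file_edit", "Edit files in the workspace"),
    Tool("shell", "Run shell commands"),
]
chat = Chat(enabled={"file_edit"})
payload_tools = [tool.name for tool in visible_tools(chat, registry)]
```

Because the disabled tools never reach the request, the model has nothing to call by accident.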

Dynamic System Prompts

New: The right instructions, at the right time.

As features activate and deactivate, Cyréna's instruction set updates automatically, so she always operates under the constraints most relevant to your current stack: Angular prompts for Angular work, firmware rules for firmware work. No static, one-size-fits-all prompt. The context adapts with you.

- Prompts update as features change

- Stack-specific constraints automatically applied

- No static, bloated system prompt

- Context that adapts to your current task
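As a rough mental model, the system prompt can be thought of as a base instruction plus one fragment per active feature, rebuilt whenever the feature set changes. Everything named here (`BASE_PROMPT`, `FEATURE_PROMPTS`, `build_system_prompt`, and the fragment text) is an assumption made for illustration, not Cyréna's real prompt content.

```python
BASE_PROMPT = "You are a coding assistant."

# Hypothetical per-feature instruction fragments.
FEATURE_PROMPTS = {
    "angular": "Follow Angular conventions for components and services.",
    "firmware": "Respect firmware constraints such as limited memory.",
}


def build_system_prompt(active_features: set[str]) -> str:
    """Assemble the prompt from the base text plus active-feature fragments.

    Features are sorted so the same feature set always yields the same prompt.
    Features without a fragment contribute nothing.
    """
    parts = [BASE_PROMPT]
    for name in sorted(active_features):
        fragment = FEATURE_PROMPTS.get(name)
        if fragment:
            parts.append(fragment)
    return "\n\n".join(parts)


angular_prompt = build_system_prompt({"angular"})
```

Switching from Angular work to firmware work would then mean calling `build_system_prompt({"firmware"})`, and the Angular fragment drops out.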

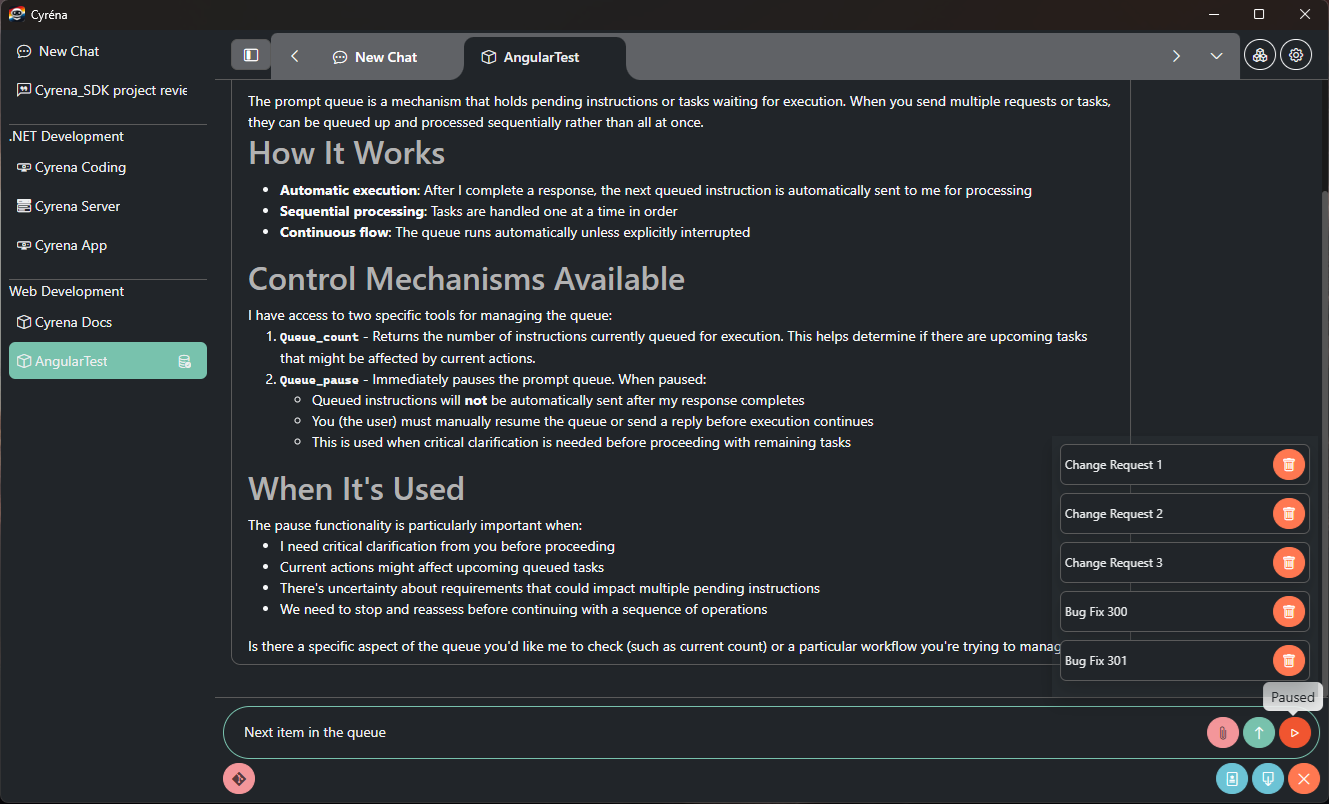

Prompt Queuing

New: Load up your tasks. Go have a coffee.

Queue a sequence of instructions and let Cyréna work through them automatically. Each response completes before the next instruction fires. If something critical comes up mid-queue, Cyréna pauses and waits for your input before continuing. You stay in control without staying at your desk.

- Queue multiple instructions in sequence

- Each response completes before the next fires

- Auto-pause when critical input is required

- Work through tasks while you do something else
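The queue behaviour described above can be sketched as a loop that sends one instruction at a time and stops when a reply asks for input. This is a minimal sketch under stated assumptions: `PromptQueue`, `Reply`, `needs_input`, and `fake_send` are hypothetical names, and how Cyréna actually decides that something is "critical" is not specified here.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Reply:
    text: str
    needs_input: bool = False  # assumed flag: the reply requires a human decision


class PromptQueue:
    def __init__(self, send):
        # send: callable taking a prompt and returning a Reply once the
        # response is complete (so prompts never overlap).
        self._send = send
        self._pending = deque()
        self.paused = False
        self.replies = []

    def add(self, prompt: str) -> None:
        self._pending.append(prompt)

    def run(self) -> None:
        """Send queued prompts in order until the queue is empty or paused."""
        while self._pending and not self.paused:
            reply = self._send(self._pending.popleft())
            self.replies.append(reply)
            if reply.needs_input:
                self.paused = True

    def resume(self) -> None:
        """Continue with the remaining prompts after the user has responded."""
        self.paused = False
        self.run()


def fake_send(prompt: str) -> Reply:
    # Stand-in for the model call; treats any deploy step as needing approval.
    return Reply(text=f"done: {prompt}", needs_input="deploy" in prompt)


queue = PromptQueue(fake_send)
for prompt in ["write tests", "deploy to staging", "update changelog"]:
    queue.add(prompt)
queue.run()
```

In this example the queue pauses after the deploy step; calling `queue.resume()` then works through the remaining instruction.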

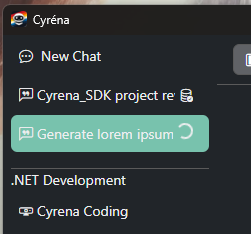

Chat Status

New: Always know what's happening, at a glance.

Cyréna shows the live status of every chat directly in the sidebar. See which chats have context loaded and ready, which are actively working, and which are idle — without switching between them. No more wondering if the AI is still running or if a chat needs to be reopened.

- Live status in the sidebar for every chat

- Unloaded — idle, context loads on open

- Loaded — context in memory and ready

- Working — AI is actively processing
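The three statuses can be read as a small state machine. The sketch below is one plausible interpretation: the status names come from the list above, but the allowed transitions (apart from an unloaded chat loading on open) and the names `ChatStatus`, `ALLOWED`, and `transition` are assumptions.

```python
from enum import Enum


class ChatStatus(Enum):
    UNLOADED = "Unloaded"  # idle, context loads on open
    LOADED = "Loaded"      # context in memory and ready
    WORKING = "Working"    # AI is actively processing


# Assumed transitions, for illustration only.
ALLOWED = {
    ChatStatus.UNLOADED: {ChatStatus.LOADED},
    ChatStatus.LOADED: {ChatStatus.WORKING, ChatStatus.UNLOADED},
    ChatStatus.WORKING: {ChatStatus.LOADED},
}


def transition(current: ChatStatus, target: ChatStatus) -> ChatStatus:
    """Return the new status, or raise if the move is not allowed."""
    if target not in ALLOWED[current]:
        raise ValueError(f"cannot go from {current.value} to {target.value}")
    return target


status = transition(ChatStatus.UNLOADED, ChatStatus.LOADED)
status = transition(status, ChatStatus.WORKING)
```

Under these assumptions, a chat has to be opened and loaded before it can start working.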